Link to video: 藝文薈澳_澳門點雲花園 Art Macao_Macao Pointcloud Garden | 藝術家 Artist: Clement Valla

2023, Former Iec Long Firecracker Factory, Macau

Macau Pointcloud Garden Artist Speech

The Macau Pointcloud Gardens are a series of two virtual gardens I created based on 3D scans of Macau’s gardens and natural spaces.

In many ways technology, and especially screen-based technology, distances us from nature. Spending time on screen can be seen as diametrically opposed to being immersed in close observation of nature. My work proposes ways in which technology might actually bring us closer to nature. After all, technology has enabled humans to understand the natural world in completely new ways, and this new understanding has brought with it new poetics.

My work also explores the gap between human perception and machine vision. For this project I used point clouds, which are the building blocks of 3D scanning technologies. Point clouds are a way for computers to transform the visual world into data points. I wanted to let humans experience those data points to elicit emotional and bodily responses to this form of technological vision. It is the differences, the gaps between human and machine, that can elicit new aesthetic experiences. I want to celebrate those gaps, and celebrate the entanglement of human and machine as a way to expand poetic possibility.

The Wind Garden

The Wind Garden is a collection of pointcloud scans from all over Macau, brought together into a single cohesive garden.

In July, I visited as many of Macau’s green spaces as possible, took thousands of photographs, and made 3d scans of what I encountered there. These 3D scans served as raw material to collage and compose together a virtual garden that represents Macau.

Two virtual cameras slowly walk through the garden side by side, but each sees things slightly differently, from a unique vantage, like two friends or lovers taking a stroll.

The Japanese word komorebi means the light filtering through the leaves. It is a deeply soothing sensation we are all familiar with. By focusing on how the points move across the screen, and through their slow shimmering motion, I have tried to capture the sensation of ‘komorebi’ in the gardens.

The Transparent Garden

The Transparent Garden focuses on the former Iec Long Firecracker Factory and the wetlands next door. The location of the screen displaying this pointcloud garden was chosen to capture the beautiful mix of nature and architecture in Iec Long. I used a transparent screen to blend this garden with the existing site.

I have been working with 3D scanning technologies for over a decade, and until now my work has focused on nature. This site, however, made me introduce scans of architecture into my work. It makes the distinction between the natural and the man-made very difficult, and demonstrates new ways of thinking about rambunctious gardens that don’t make neat distinctions between artificial and natural. This is the spirit I tried to capture as I recreated my own version of this garden.

custom wallpaper, custom software, 8 flatscreen monitors

2022,

Other Voices, Other Rooms

Artist: Clement Valla

Curator: Hasan Bülent Kahraman

Clement Valla’s work presents us with objects, moments, situations that we think we see or that we have never seen. Human consciousness is limited within the confines of the microscope and the telescope. Both devices show us what we ‘don’t see’. Objects that are too small and too close for us to see, as well as those that are too big and too far for us to see, determine not only the realm of our consciousness but also our imagination. And those we see, also have dimensions that we do not see. By making use of the potentials of digital art, Valla adds new dimensions to nature, which we look at every day, we think we see, and believe to be in its right place and stable, yet has an aspect that always remains obscure and mysterious. He drags nature, with a dimension that is imperceptible to us, to the limits of our perception of reality. Thus, he not only establishes an issue concerning vision, but steers towards the subject matter of transformation. Time is transformation. Being able to see transformation is to transcend to a time beyond. Thus, we come across the uncanniness of the aspect of nature that we have always considered to be curative.

As the temporal axes of visuality and the transformation in nature turn the interior space into exterior and the exterior space into interior, Valla turns the real into a marvel of reality.

Is the image always illusive (an illusion)?

Wallpaper, UV print on dibond, custom software, flatscreen monitors

2021,

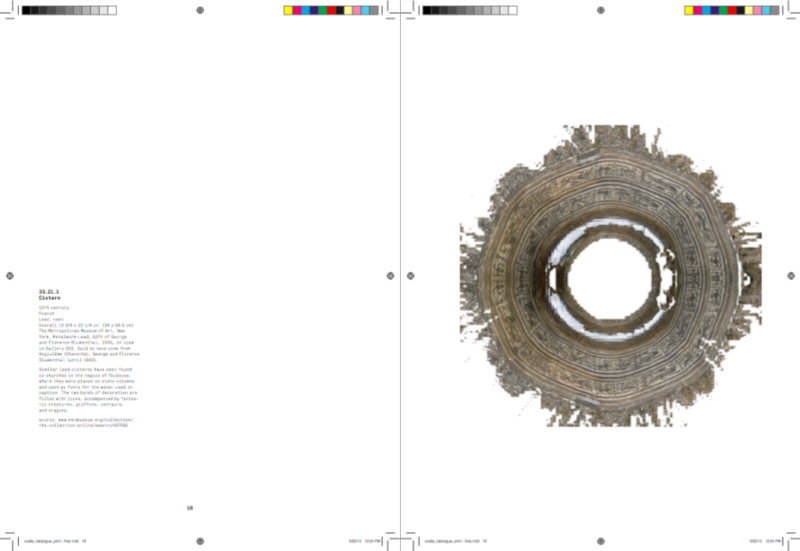

pointcloud.garden is a collection of 3D scanned gardens, each consisting of a large set of data points measured from a garden in a 3D scanning process. Each data point consists of spatial [XYZ] and color [RGB] information. The resulting data set is a discontinuous translation of a surface into discrete data points, filled with gaps and missing information. This compressed translation emphasizes certain ways in which humans experience a garden: as an aggregation of leaves, petals, stalks and stems, a set of discontinuous points forming an overall texture.
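As a rough illustration of the data structure described above, the sketch below stores each point as XYZ position plus RGB color and then thins the set to mimic the gaps a scan leaves behind. The `Point` class, the synthetic green surface, and the `keep_every` thinning rule are all hypothetical stand-ins, not the format or software used for the artworks.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Point:
    """One scanned sample: spatial XYZ plus RGB color (hypothetical layout)."""
    x: float
    y: float
    z: float
    r: int
    g: int
    b: int


def sample_surface(n_per_side: int) -> list[Point]:
    """Turn a continuous, gently undulating surface into discrete points."""
    points = []
    for i in range(n_per_side):
        for j in range(n_per_side):
            x = i / (n_per_side - 1)
            y = j / (n_per_side - 1)
            # s is the normalized height in [-1, 1]; z is the actual height.
            s = math.sin(2 * math.pi * x) * math.cos(2 * math.pi * y)
            z = 0.1 * s
            # Higher points are drawn in a brighter green.
            green = int(128 + 127 * s)
            points.append(Point(x, y, z, 40, green, 40))
    return points


def thin(points: list[Point], keep_every: int = 3) -> list[Point]:
    """Mimic missing scan data by keeping only every k-th point."""
    return points[::keep_every]


surface = sample_surface(10)   # 100 samples of the surface
cloud = thin(surface)          # a sparser, gappier version of the same surface
```

Nothing connects one point to the next: there are no edges or faces, only positions and colors, which is why the result reads as a texture of separate dots.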

Overgrowth is a series of 3d scans by Clement Valla. The artworks depict unruly yards and lawns left to re-grow and re-wild. Each unique artwork features what could happen when we step back and let exuberant nature be, with varying flowers, grasses and plant life. There are 1000 unique NFTs in the collection.

pointcloud.garden is a collection of 3d scanned gardens, each presented as raw point data.

Please visit the project here.

2019, Embedded Parables, bitforms gallery, November 14, 2019–January 19, 2020

Inkjet on cotton over CNC milled foam sculpture

2018, Super Dutchess Gallery

“Mars was empty before we came. That’s not to say that nothing had ever happened. The planet had accreted, melted, roiled and cooled, leaving a surface scarred by enormous geological features: craters, canyons, volcanoes. But all of that happened in mineral unconsciousness, and unobserved. There were no witnesses–except for us, looking from the planet next door, and that only in the last moment of its long history. We are all the consciousness that Mars has ever had.” -Kim Stanley Robinson, Red Mars

There are forces at work that shape natural forms, and forces at work that shape digital images — and humans have their part in both. These objects, at once images and sculptures, are digital copies of natural objects, minimally touched by human decision. They embody large forces at work that operate invisibly but with great effect.

Each Torus (flat tire) is a 1:1 scale print of a tire. To produce the image Valla synthesizes a 3D model from hundreds of photographs of a tire in his studio. Using physics simulation software, the artist throws a simulated linen cloth at the tire. Once the linen comes to rest, the tire model is imprinted onto the linen that drapes it – much like a full color digital rubbing.

Inkjet on linen

2016, Providence College Galleries, Providence RI

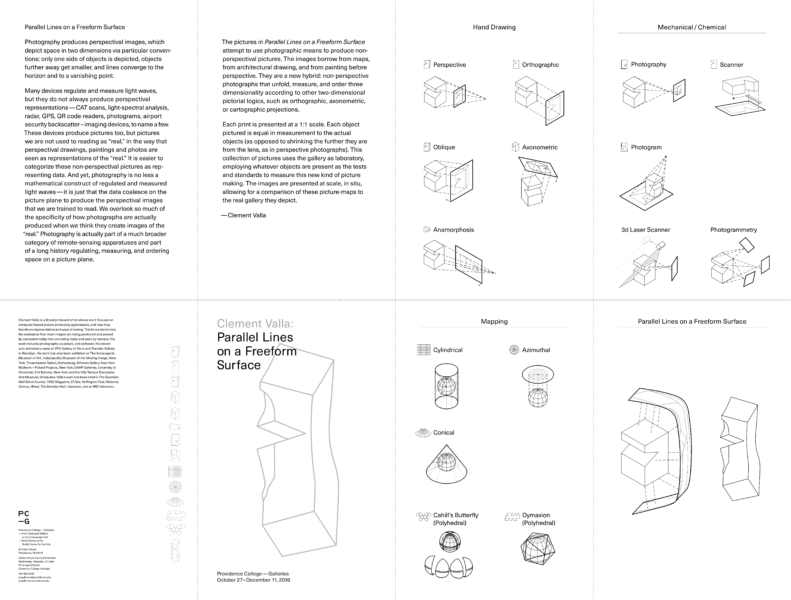

Photography produces perspectival images, which depict space in two dimensions via particular conventions: only one side of objects is depicted, objects further away get smaller, and lines converge to the horizon and to a vanishing point.

Many devices regulate and measure light waves, but they do not always produce perspectival representations—CAT scans, light-spectral analysis, radar, GPS, QR code readers, photograms, airport security backscatter–imaging devices, to name a few. These devices produce pictures too, but pictures we are not used to reading as “real,” in the way that perspectival drawings, paintings and photos are seen as representations of the “real.” It is easier to categorize these non-perspectival pictures as representing data. And yet, photography is no less a mathematical construct of regulated and measured light waves—it is just that the data coalesce on the picture plane to produce the perspectival images that we are trained to read. We overlook so much of the specificity of how photographs are actually produced when we think they create images of the “real.” Photography is actually part of a much broader category of remote-sensing apparatuses and part of a long history regulating, measuring, and ordering space on a picture plane.

The pictures in Parallel Lines on a Freeform Surface attempt to use photographic means to produce nonperspectival pictures. The images borrow from maps, from architectural drawing, and from painting before perspective. They are a new hybrid: non-perspective photographs that unfold, measure, and order three dimensionality according to other two-dimensional pictorial logics, such as orthographic, axonometric, or cartographic projections.

Each print is presented at a 1:1 scale. Each object pictured is equal in measurement to the actual objects (as opposed to shrinking the further they are from the lens, as in perspective photographs). This collection of pictures uses the gallery as laboratory, employing whatever objects are present as the tests and standards to measure this new kind of picture making. The images are presented at scale, in situ, allowing for a comparison of these picture-maps to the real gallery they depict.
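The difference between the two pictorial logics can be shown with a small numerical sketch. It assumes an idealized pinhole camera looking down the z axis with a hypothetical `focal_length` of 1; it illustrates the general projection formulas, not the process used to make the prints.

```python
def perspective(point, focal_length=1.0):
    """Pinhole projection: image coordinates shrink as depth z grows."""
    x, y, z = point
    return (focal_length * x / z, focal_length * y / z)


def orthographic(point):
    """Orthographic projection: depth is simply discarded."""
    x, y, _ = point
    return (x, y)


def projected_width(project, width, depth):
    """Width on the picture plane of an object `width` wide at `depth`."""
    left = project((-width / 2, 0.0, depth))
    right = project((width / 2, 0.0, depth))
    return right[0] - left[0]


# The same 1-unit-wide object, placed at two different distances.
near_persp = projected_width(perspective, 1.0, 2.0)   # 0.5
far_persp = projected_width(perspective, 1.0, 4.0)    # 0.25
near_ortho = projected_width(orthographic, 1.0, 2.0)  # 1.0
far_ortho = projected_width(orthographic, 1.0, 4.0)   # 1.0
```

Under perspective the object halves in width when its distance doubles. Under orthographic projection it keeps its measured width at any distance, which is the property a 1:1 scale picture relies on.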

Inkjet on belgian linen over CNC milled foam sculptures

2015, XPO Gallery, Paris France

Download the Catalog for Surface Proxy

Surface Proxy is a show of picture surrogates, inkjet prints on linen wrapped around CNC milled foam sculptures.

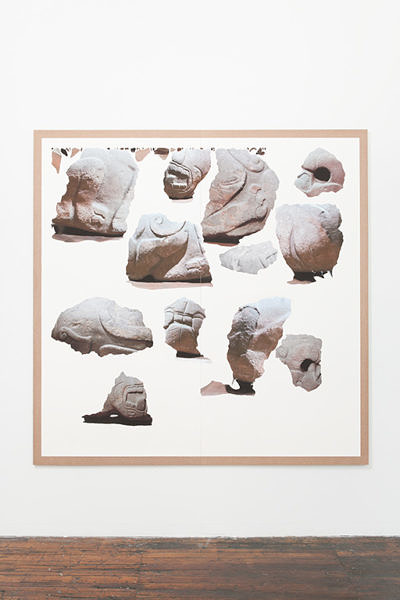

Valla begins with Medieval French architectural and sculptural fragments that have made their way into the collections of museums in New York and Providence, where the artist lives and works. The objects at the various institutions are chosen because they bear visible scars of their transformation from fixed architectural ornaments to stand-alone sculptural works.

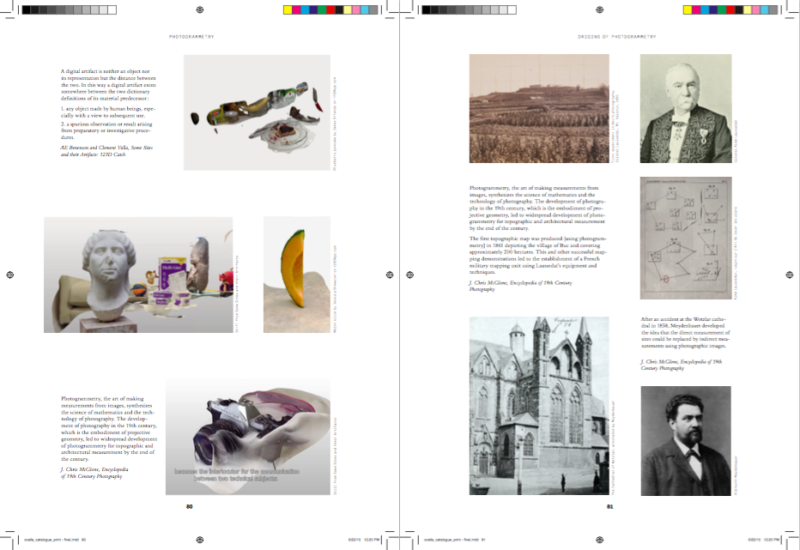

In order to reproduce the fragments Valla uses a process known as photogrammetry: objects are photographed from multiple angles and a 3d model of the objects is produced by triangulating the multiple images.
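The core geometric idea of triangulation can be sketched in two dimensions: two cameras at known positions each measure the angle to the same feature, and the feature lies where the two lines of sight cross. This is a deliberately simplified illustration with a hypothetical `triangulate` function. Real photogrammetry software works in 3D with many images and must also estimate the camera positions themselves.

```python
import math


def triangulate(baseline, angle_a, angle_b):
    """Locate a point seen from two cameras on a shared baseline.

    Camera A sits at (0, 0) and camera B at (baseline, 0). Each angle is
    measured in radians between the baseline and that camera's line of
    sight to the point.
    """
    tan_a = math.tan(angle_a)
    tan_b = math.tan(angle_b)
    # From y = x * tan_a and y = (baseline - x) * tan_b.
    y = baseline * tan_a * tan_b / (tan_a + tan_b)
    x = y / tan_a
    return (x, y)


# A feature at (3, 4) seen by cameras 10 units apart.
angle_from_a = math.atan2(4, 3)       # angle measured at camera A
angle_from_b = math.atan2(4, 10 - 3)  # angle measured at camera B
recovered = triangulate(10.0, angle_from_a, angle_from_b)
```

Repeating this for thousands of features matched across photographs yields the cloud of 3D positions from which a model is built.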

The origins of photogrammetry are intrinsically linked with the medieval content. The technique was invented by a French mathematician in 1849 and perfected in the latter half of the 19th century by Albrecht Meydenbauer, a Prussian architect tasked with surveying historical monuments on the verge of complete decay. A near-fatal fall from a gothic cathedral convinced Meydenbauer to employ the ‘magic scaffold’ of photogrammetry – to measure and model from a distance. Contemporary photogrammetry software has made this once specialized scientific process widely accessible.

The 3d models produced by photogrammetry are images in a quite literal sense – extracted from optical technologies, they are formed on the computer screen by wrapping and folding flat pictures around hollow 3d volumes. The promise of 3d scanning and manufacturing is that we will be able to reproduce objects. The truth is that we get 3d images. Valla emphasises the increasing confusion between image and objects in these works.

photos by Vinciane Verguethen

Inkjet on belgian linen over CNC milled foam sculptures

2014, XPO Gallery, Paris, France

Wrapped terracotta neck-amphora (storage jar)

Attributed to the New York Nettos Painter

Metropolitan Museum of Art

Period: Proto-Attic

Date: second quarter of the 7th century B.C.

Culture: Greek, Attic

Medium: Terracotta

Dimensions: H. 42 3/4 in. (108.6 cm); diameter 22 in. (55.9 cm)

Classification: Vases

Credit Line: Rogers Fund, 1911

Accession Number: 11.210.1

Wrapped Terracotta neck-amphora (storage jar) is a sculpture that is neither an object nor an image. Valla used digital 3D capture to reproduce a 7th Century BC Greek amphora on view at the Metropolitan Museum of Art. To highlight the artifacts produced by computer vision the completed reproduction is broken down into its constituent parts: a flat image wrapped around a 3D volume. The resulting sculpture echoes the packing of objects for storage and shipping, transitory moments when only an object’s image is accessible. The image ends up serving as surrogate for the sculpture underneath.

3D Reproductions of objects from the Metropolitan Museum of Art, Inkjet on paper mounted on MDF, sintered nylon on laser-cut MDF tables

2014, Transfer Gallery, Brooklyn

Of the moral effect of the monuments themselves, standing as they do in the depths of a tropical forest, silent and solemn, strange in design, excellent in sculpture, rich in ornament, different from the works of any other people, their uses and purposes and whole history so entirely unknown with hieroglyphics explaining all, but being perfectly unintelligible, I shall not pretend to convey any idea. Often the imagination was pained in gazing at them.

— John Lloyd Stephens, Incidents of Travel in Central America, Chiapas, and Yucatan, 1841

This week, Twitterers around the world received some devastating news: The Twitter account @Horse_ebooks, a cult favorite, was human after all.

— Bianca Bosker, Twitter Hoax Reveals What We Desire Most From Machines, Huffington Post, Sept. 26, 2013

Surface Survey is comprised of digital prints and 3D printed sculptures, structured around concepts of archaeology, computer software, meaning-making, and images that are not meant for human consumption.

To explore these themes, Valla collects digital artifacts produced by software that turns photographs into 3D Models. The arranged fragments are left untouched, exhibiting the software’s process as-is. The work is comprised of both 2D images meant to be processed by the computer (but never seen by humans) and 3D printed fragments that indicate how the software pieces the shapes together.

Valla’s subjects are varied: from sculptural antiquities he photographed in the Metropolitan Museum’s collections, to contemporary ephemera, to 19th Century inventions. The work uncovers subtle shapes and textures that illustrate these objects in unexpected ways and cast a new light on the algorithms that digitized them.

Valla’s work reflects on the human potential of meaning-making in unfamiliar, software-created images. He is interested in how distant what a computer reads is from what a human will understand. This interest extends into the language of computer image-making, suggesting an archaeology of computer software, whose extractions reveal the computer’s systematic logic.

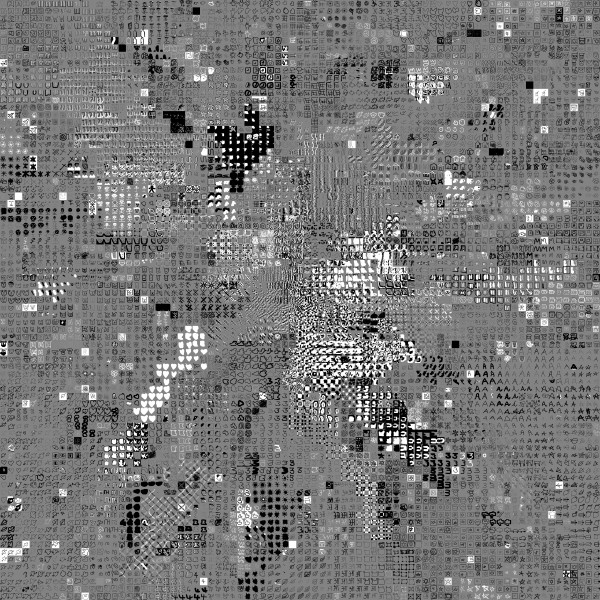

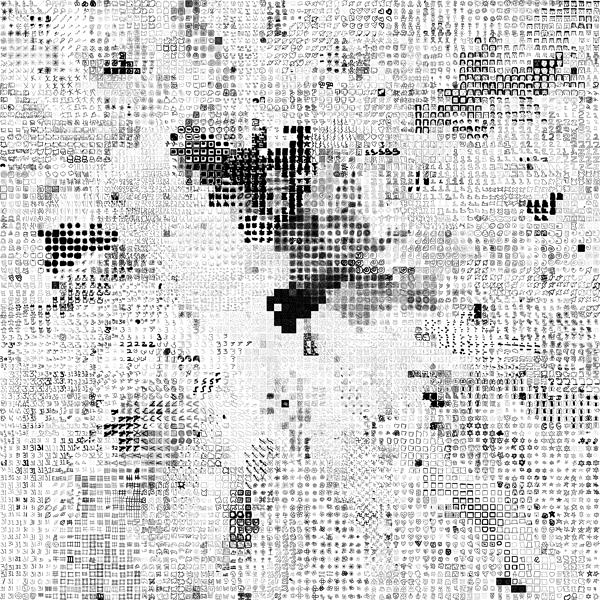

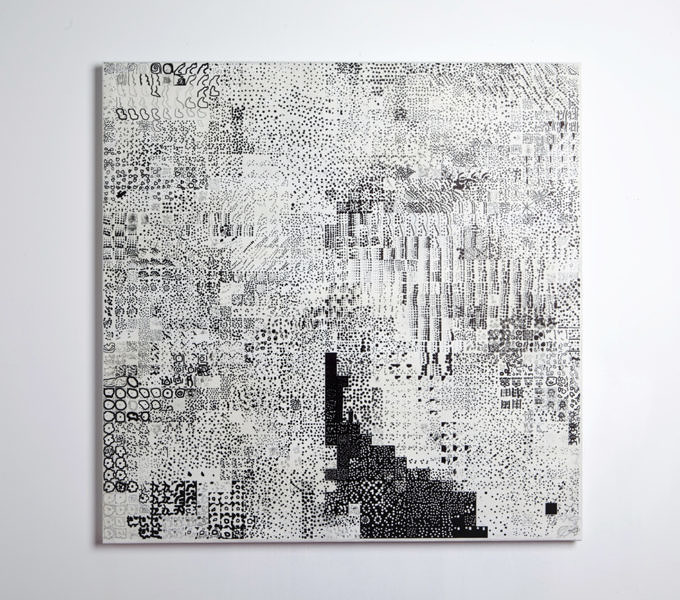

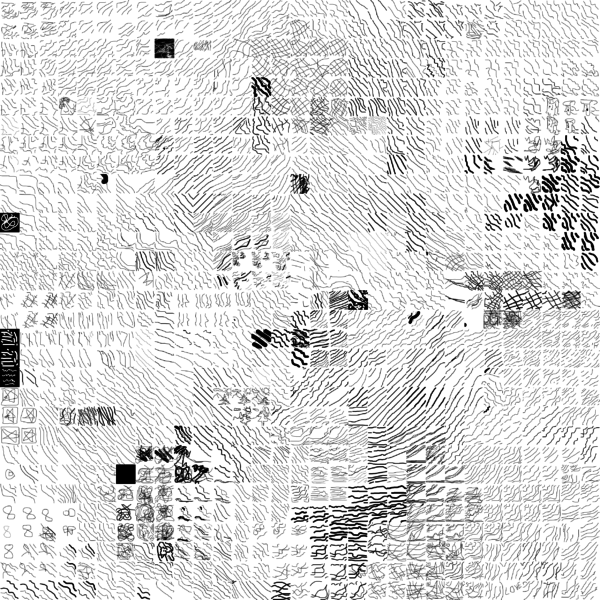

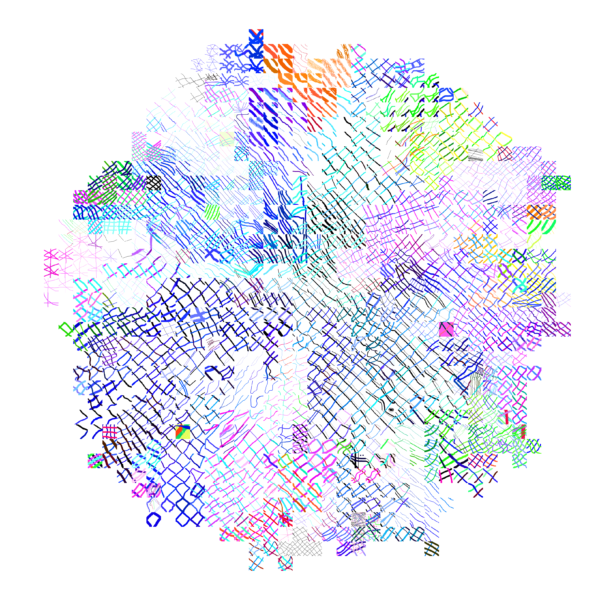

Drawings by thousands of individuals, custom software, inkjet on paper

2011-Ongoing, Dorsch Gallery, Miami, FL, School of Visual Arts, New York, NY, Brown University, Providence, RI, Indianapolis Museum of Contemporary Art, Indianapolis, IN

The Seed Drawings are a set of drawings that are repeated copies, much like a drawing-version of the game ‘Telephone’, and produced by online workers. The process begins with one simple drawing (the ‘Seed’) and each worker copies the previous drawing. The completed drawings track the evolution of the copies over time, as well as the ruptures produced by those workers who ignore the instructions and change the drawings. The workers are culled through the online service of Amazon.com’s Mechanical Turk, a pre-existing system that offers workers pay in reward for specified tasks. For the Seed Drawings, each worker is paid 5¢ to copy the previous worker’s drawing— a paltry sum for a menial task. The Seed Drawings explore two aspects of contemporary networks: the online proliferation of copies and repeated memes, and the spread of cheap, crowdsourced micro-labor.

Inkjet on Canvas

2012-Ongoing, 319 Scholes, New York, NY, DAAP Galleries, University of Cincinnati, Cincinnati, OH, Bitforms Gallery, New York, NY, ASC Projects, San Francisco, CA, Kunsthalle zu Kiel, Kiel, Germany, University of Massachusetts, Amherst, MA, University at Buffalo, Buffalo, NY

Note: This article was originally published on rhizome.org.

These artists (…) counter the database, understood as a structure of dehumanized power, with the collection, as a form of idiosyncratic, unsystematic, and human memory. They collect what interests them, whatever they feel can and should be included in a meaning system. They describe, critique, and finally challenge the dynamics of the database, forcing it to evolve. (1)

I collect Google Earth images. I discovered them by accident, these particularly strange snapshots, where the illusion of a seamless and accurate representation of the Earth’s surface seems to break down. I was Google Earth-ing, when I noticed that a striking number of buildings looked like they were upside down. I could tell there were two competing visual inputs here —the 3D model that formed the surface of the earth, and the mapping of the aerial photography; they didn’t match up. Depth cues in the aerial photographs, like shadows and lighting, were not aligning with the depth cues of the 3D model.

The competing visual inputs I had noticed produced some exceptional imagery, and I began to find more and start a collection. At first, I thought they were glitches, or errors in the algorithm, but looking closer, I realized the situation was actually more interesting — these images are not glitches. They are the absolute logical result of the system. They are an edge condition—an anomaly within the system, a nonstandard, an outlier, even, but not an error. These jarring moments expose how Google Earth works, focusing our attention on the software. They are seams which reveal a new model of seeing and of representing our world – as dynamic, ever-changing data from a myriad of different sources – endlessly combined, constantly updated, creating a seamless illusion.

3D Images like those in Google Earth are generated through a process called texture mapping. Texture mapping is a technology developed by Ed Catmull in the 1970s. In 3D modeling, a texture map is a flat image that gets applied to the surface of a 3D model, like a label on a can or a bottle of soda. Textures typically represent a flat expanse with very little depth of field, meant to mimic surface properties of an object. Textures are more like a scan than a photograph. The surface represented in a texture coincides with the surface of the picture plane, unlike a photograph that represents a space beyond the picture plane. This difference might be summed up another way: we see through a photograph, we look at a texture. This is an important distinction in 3D modeling, because textures are stretched across the surface of a 3D model, in essence becoming the skin for the model.
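In code, the "label on a can" idea comes down to a lookup: every spot on the model's surface carries a pair of coordinates (commonly called u and v, each from 0 to 1) that says which part of the flat image belongs there. The sketch below is a minimal nearest-pixel version of that lookup. The tiny 2×2 texture, the `vertex_uvs` table, and the convention that v = 0 is the top row are illustrative assumptions, not details of Google Earth.

```python
def sample_texture(texture, u, v):
    """Return the texel nearest to (u, v), with u and v in [0, 1].

    `texture` is a list of rows; v = 0 is taken to be the top row
    (an assumed convention; graphics systems differ on this).
    """
    height = len(texture)
    width = len(texture[0])
    # Clamp so that u == 1.0 or v == 1.0 still lands on the last texel.
    col = min(int(u * width), width - 1)
    row = min(int(v * height), height - 1)
    return texture[row][col]


# A 2x2 flat image, standing in for an aerial photograph.
texture = [
    ["red", "green"],
    ["blue", "white"],
]

# Hypothetical model vertices, each pinned to a spot on the flat image.
vertex_uvs = {
    "roof_corner": (0.0, 0.0),
    "roof_edge": (0.99, 0.0),
    "street": (0.0, 1.0),
    "river": (1.0, 1.0),
}

colors = {name: sample_texture(texture, u, v)
          for name, (u, v) in vertex_uvs.items()}
```

The lookup never asks what the image depicts. Whatever pixels sit at a given (u, v) are stretched onto the model at that spot, whether or not the shadows and depth inside the photograph agree with the shape underneath.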

Google Earth’s textures, however, are not shallow or flat. They are photographs that we look through into a space represented beyond, a space our brain interprets as having three dimensions and depth. We see space in the aerial photographs because of light and shadows and because of our prior knowledge of experienced space. When these photographs get distorted and stretched across the 3D topography of the earth, we are both looking at the distorted picture plane, and through the same picture plane at the space depicted in the texture. In other words, we are looking at two spaces simultaneously. Most of the time this doubling of spaces in Google Earth goes unnoticed, but sometimes the two spaces are so different that things look strange, vertiginous, or plain wrong. But they’re not wrong. They reveal Google’s system used to map the earth: the Universal Texture.

The Universal Texture is a Google patent for mapping textures onto a 3D model of the entire globe. (2) At its core the Universal Texture is just an optimal way to generate a texture map of the earth. As its name implies, the Universal Texture promises a god-like (or drone-like) uninterrupted navigation of our planet: not a tiled series of discrete maps, but a flowing and fluid experience. This experience is so different, so much more seamless than previous technologies, that it is an achievement quite like what the escalator did to shopping:

No invention has had the importance for and impact on shopping as the escalator. As opposed to the elevator, which is limited in terms of the numbers it can transport between different floors and which through its very mechanism insists on division, the escalator accommodates and combines any flow, efficiently creates fluid transitions between one level and another, and even blurs the distinction between separate levels and individual spaces. (3)

In the digital media world, this fluid continuity is analogous to the infinite scroll’s effect on Tumblr. In Google Earth, the Universal Texture delivers a smooth, complete and easily accessible knowledge of the planet’s surface. The Universal Texture is able to take a giant photo collage made up of aerial photographs from all kinds of different sources — various companies, governments, mapping institutes — and map it onto a three-dimensional model assembled from as many distinct sources. It blends these disparate data together into a seamless space – like the escalator merges floors in a shopping mall.

Our mechanical processes for creating images have habituated us into thinking in terms of snapshots – discrete segments of time and space (no matter how close together those discrete segments get, we still count in frames per second and image aspect ratios). But Google is thinking in continuity. The images produced by Google Earth are quite unlike a photograph that bears an indexical relationship to a given space at a given time. Rather, they are hybrid images, a patchwork of two-dimensional photographic data and three-dimensional topographic data extracted from a slew of sources, data-mined, pre-processed, blended and merged in real-time. Google Earth is essentially a database disguised as a photographic representation.

It is an automated, statistical, incessant, universal representation that selectively chooses its data. (For one, there is no ‘night’ in Google’s version of Earth.) The system edits a particular representation of the world. The software edits, re-assembles, processes and packages reality in order to form a very specific and useful model. These collected images feel alien, because they are clearly an incorrect representation of the earth’s surface. And it is precisely because humans did not directly create these images that they are so fascinating. They are created by an algorithm that finds nothing wrong in these moments. They are less a creation, than a kind of fact – a representation of the laws of the Universal Texture. As a collection the anomalies are a weird natural history of Google Earth’s software. They are strange new typologies, representative of a particular digital process. Typically, the illusion the Universal Texture creates makes the process itself go unnoticed, but these anomalies offer a glimpse into the data collection and assembly. They bring the diverging data sources to light. In these anomalies we understand there are competing inputs, competing data sources and discrepancy in the data. The world is not so fluid after all.

By capturing screenshots of these images in Google Earth, I am pausing them and pulling them out of the update cycle. I capture these images to archive them – to make sure there is a record that this image was produced by the Universal Texture at a particular time and place. As I kept looking for more anomalies, and revisiting anomalies I had already discovered, I noticed the images I had discovered were disappearing. The aerial photographs were getting updated, becoming ‘flatter’ – from being taken at less of an angle or having the shadows below bridges muted. Because Google Earth is constantly updating its algorithms and three-dimensional data, each specific moment could only be captured as a still image. I know Google is trying to fix some of these anomalies too – I’ve been contacted by a Google engineer who has come up with a clever fix for the problem of drooping roads and bridges. Though the change has yet to appear in the software, it’s only a matter of time.

Taking a closer look, Google’s algorithms also seem to have a way to select certain types of aerial photographs over others, so as more photographs are taken, the better ones get selected. To Google, better photographs are flatter, have fewer shadows and are taken from higher angles. Because of this progress, these strange images are being erased. I see part of my work as archiving these temporal digital typologies. I also call these images postcards to cast myself as a tourist in the temporal and virtual space – a space that exists digitally for a moment, and may perhaps never be reconstituted again by any computer.

Nothing draws more attention to the temporality of these images than the simple observation that the clouds are disappearing from Google Earth. After all, clouds obscure the surface of the planet, so photos with no clouds are privileged. The Universal Texture and its attendant database algorithms are trained on a few basic qualitative traits – no clouds, high contrast, shallow depth, daylight photos. Progress in architecture has given us total control over interior environments: climate-controlled spaces smoothly connected by escalators in shopping malls, airports, hotels and casinos. Progress in the Universal Texture promises to give us a smooth and continuous 24-hour, cloudless, daylit world, increasingly free of jarring anomalies, outliers and statistical inconsistency.

(1) Quaranta, Domenico, “Collect the WWWorld. The Artist as Archivist in the Internet Age,” in Domenico Quaranta et al., Collect the WWWorld, exhibition catalogue, LINK Editions, September 2011.

(2) “WebGL Earth Documentation – 2 Real-time Texturing Methods,” n.p., n.d. Web. 30 July 2012.

(3) Jovanovic Weiss, Srdjan and Leong, Sze Tsung, “Escalator,” in Koolhaas et al., Harvard Design School guide to shopping, Köln, New York, Taschen, 2001.

Screenshots from Google Earth, postcards and postcard racks, inkjet on paper, website

2010-Ongoing, http://www.postcards-from-google-earth.com/, CAM, Raleigh, NC, XPO GALLERY, Paris, France, Thomassen Gallery, Gothenburg, Sweden, University at Buffalo, Buffalo, NY, Art Souterrain, Montreal, Canada, swissnex, San Francisco, CA, Lab for Emerging Arts and Performance (LEAP), Berlin, Germany, Wasserman Projects, Birmingham, MI, Phillips auction house, New York, NY, Tin Sheds Gallery, Sydney, Australia, Gallery Wendi Norris, San Francisco, CA, Villa Terrace Decorative Arts Museum, Milwaukee, WI

I collect Google Earth images. I discovered strange moments where the illusion of a seamless representation of the Earth’s surface seems to break down. At first, I thought they were glitches, or errors in the algorithm, but looking closer I realized the situation was actually more interesting — these images are not glitches. They are the absolute logical result of the system. They are an edge condition—an anomaly within the system, a nonstandard, an outlier, even, but not an error. These jarring moments expose how Google Earth works, focusing our attention on the software. They reveal a new model of representation: not through indexical photographs but through automated data collection from a myriad of different sources constantly updated and endlessly combined to create a seamless illusion; Google Earth is a database disguised as a photographic representation. These uncanny images focus our attention on that process itself, and the network of algorithms, computers, storage systems, automated cameras, maps, pilots, engineers, photographers, surveyors and map-makers that generate them.

The 38 texture maps included in Three Digs A Skull were created by photo-modeling software. The maps are used to add photorealistic surface information to web-based 3D models scanned by different users. They are produced by an algorithm and meant to be parsed by modeling software, but not seen by humans. As such, their aesthetic is non-intentional.

Each visitor to tex_archive.com activates a search for a new texture map, which is then presented, archived, and posted to twitter at @tex_archive (where it is cataloged by the Library of Congress). By retrieving these images the texture maps are removed from their normal operation for display to a human viewer. Divorced from the software and 3D model, they no longer function as operative images. The tex_archive records this transformation.

Three Digs A Skull shifts the context again, re-framing the tex_archive as a chance-retrieved inventory of object-images.

tex-archive.com

twitter.com/tex_archive

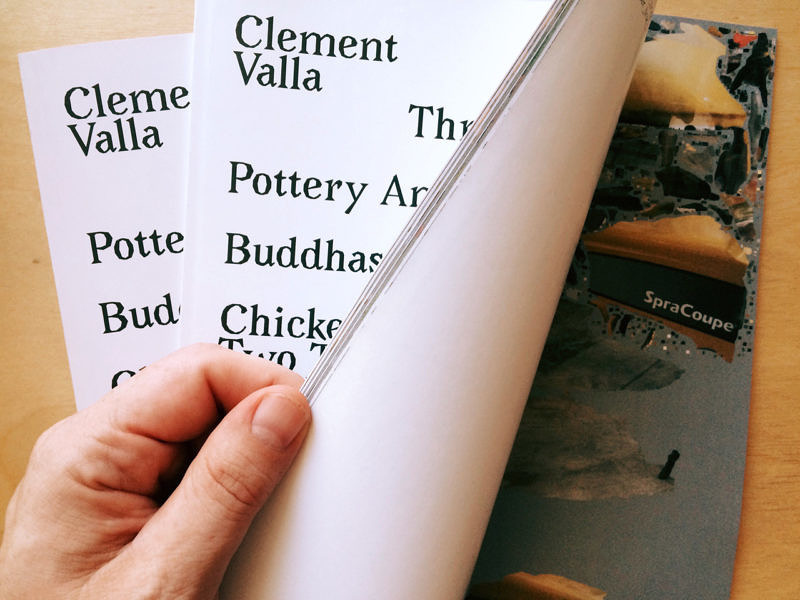

Artist Book, Drawings by 6560 individuals, custom software

2014, RRose Editions and XPO Gallery, Paris France

The drawings in this book were produced online by anonymous workers through Amazon.com’s micro-labor market known as Mechanical Turk. The drawings began with an initial seed drawing that was copied 8 times. Each of these copies was then copied in turn, and so on, so that all the drawings are copies of copies of copies of the original seed drawing: a huge game of ‘telephone’ played out in a 2-dimensional grid. Each page tracks the distance of the drawings from the original seed.
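One way to read this description, offered here only as an assumption, is that the seed sits at the center of a square grid, its 8 copies fill the 8 surrounding cells, and each later copy fills the next cell outward. Under that reading, a drawing's distance from the seed is the number of rings between them. The function names below are hypothetical and the layout is inferred from the text, not taken from the artist's software.

```python
def ring_distance(cell, seed=(0, 0)):
    """Rings between a grid cell and the seed (Chebyshev distance)."""
    return max(abs(cell[0] - seed[0]), abs(cell[1] - seed[1]))


def cells_in_ring(distance):
    """Number of grid cells exactly `distance` rings from the seed."""
    return 1 if distance == 0 else 8 * distance


def copies_within(radius):
    """Total copies (excluding the seed) out to `radius` rings."""
    return sum(cells_in_ring(d) for d in range(1, radius + 1))


first_ring = cells_in_ring(1)      # 8 direct copies of the seed
total_at_40 = copies_within(40)    # 6560 copies in an 81 x 81 grid
```

Under this reading, a grid extending 40 rings from the seed holds 6560 copies, the same number as the 6560 individuals credited for the book. That agreement is suggestive but does not confirm the layout.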

The workers are culled through the online service of Amazon.com’s Mechanical Turk, a pre-existing system that offers workers pay in reward for specified tasks. For the Seed Drawings, each worker is paid 5¢ to copy the previous worker’s drawing— a paltry sum for a menial task. The Seed Drawings explore two aspects of contemporary networks: the online proliferation of copies and repeated memes, and the spread of cheap, crowdsourced micro-labor.

for more information contact:

RRose Editions

XPO Gallery

Satellite imagery, wooden table, brick, trestle, inkjet on canvas

2014, XPO Gallery, Paris France

The Universal Texture Recreated transforms a flat satellite photograph into a three-dimensional image, reconstructing images from Google Earth using the low-tech medium of domestic furniture.